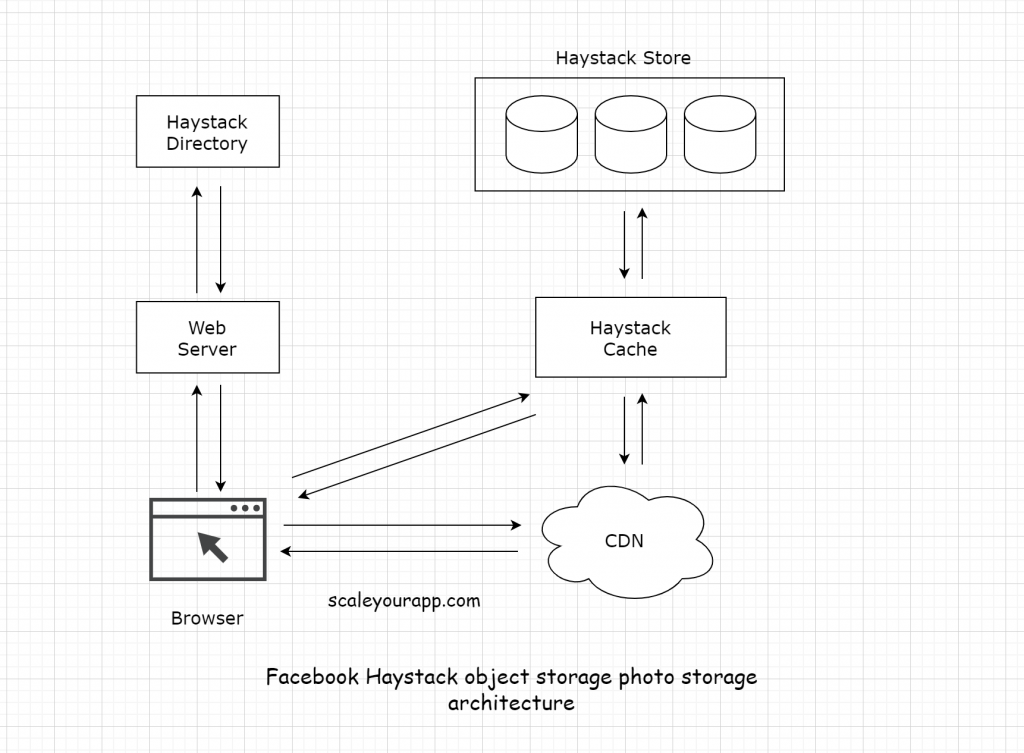

Facebook photo storage architecture

Facebook built Haystack, an object storage system designed to store photos at large scale. The platform stores over 260 billion images, amounting to over 20 petabytes of data. One billion new photos, approximately 60 terabytes of data, are uploaded each week. At peak, the platform serves over one million images per second.

In the original NAS-based photo storage architecture, Facebook ran into throughput and latency problems: reading the photos and looking up their associated metadata on NAS caused excessive disk operations, up to about ten just to retrieve a single image.
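To see why cutting metadata lookups matters, here is a toy sketch of the general idea behind a Haystack-style store: many photos are appended to one large file, and an in-memory index maps each photo id to its offset and size, so a read needs a single positioned read instead of several filesystem metadata lookups. The class name `SimpleHaystackVolume` and the 12-byte header layout are hypothetical simplifications, not Haystack's actual on-disk format (which carries more per-photo metadata).

```python
import io
import struct

# Hypothetical minimal per-photo header: 8-byte photo id + 4-byte payload length.
HEADER = struct.Struct("<QI")


class SimpleHaystackVolume:
    """Toy append-only volume: many photos share one file, and an in-memory
    index maps photo id -> (payload offset, payload size)."""

    def __init__(self, f):
        self.f = f          # one large file object holding many photos
        self.index = {}     # photo_id -> (payload offset, payload size)

    def write(self, photo_id, data):
        # Append header + payload at the end of the volume.
        self.f.seek(0, io.SEEK_END)
        start = self.f.tell()
        self.f.write(HEADER.pack(photo_id, len(data)))
        self.f.write(data)
        self.index[photo_id] = (start + HEADER.size, len(data))

    def read(self, photo_id):
        offset, size = self.index[photo_id]  # metadata lookup happens in memory
        self.f.seek(offset)                  # one positioned read of the payload
        return self.f.read(size)

    def rebuild_index(self):
        """Recover the in-memory index by scanning the volume sequentially."""
        self.index.clear()
        self.f.seek(0)
        while True:
            header = self.f.read(HEADER.size)
            if len(header) < HEADER.size:
                break
            photo_id, size = HEADER.unpack(header)
            self.index[photo_id] = (self.f.tell(), size)
            self.f.seek(size, io.SEEK_CUR)   # skip over the payload
```

For example, `vol = SimpleHaystackVolume(io.BytesIO())`, then `vol.write(1, b"...")` and `vol.read(1)`. Keeping the whole index in RAM is what removes the per-read metadata disk accesses; the trade-off is that the index must stay small enough to fit in memory.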

Tail latency in distributed systems

Tail latency refers to the small percentage of a system's responses that are the slowest compared to the majority. It is usually measured at the 98th or 99th percentile of response times. This may seem insignificant at first, but for large applications like LinkedIn it has noticeable effects: at the 99th percentile, a page with a million views per day would serve 10,000 of those views with the tail delay. Read how LinkedIn deals with long-tail network latencies.
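The percentile arithmetic above can be sketched as follows. This is a minimal illustration using the nearest-rank percentile definition (one of several common definitions); the function names and the sample latencies are hypothetical.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples <= it."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def requests_in_tail(total_requests, p):
    """Number of requests slower than the p-th percentile."""
    return round(total_requests * (100 - p) / 100)


# Hypothetical response times in milliseconds: most are fast, two are very slow.
latencies_ms = [12, 15, 11, 14, 250, 13, 16, 12, 15, 900]
print(percentile(latencies_ms, 50))          # 14
print(percentile(latencies_ms, 99))          # 900
print(requests_in_tail(1_000_000, 99))       # 10000
```

The median looks healthy at 14 ms while the 99th percentile is 900 ms, which is why averages and medians alone hide tail behavior.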

There are many possible causes of tail latency: increasing load on the system, the complexity of distributed systems, application bottlenecks, slow networks, slow disk access, and more. Read more about it.

RobinHood: Tail latency-aware caching

RobinHood is a research caching system for application servers in large distributed systems with diverse backends. It dynamically partitions the cache space among the different backend services and continuously adjusts the partition sizes to reduce request tail latency.
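A heavily simplified sketch of tail-latency-aware repartitioning, loosely modeled on the idea behind RobinHood: each interval, every backend's partition gives up a small slice, and the pooled space goes to the backend that was most often the slowest sub-query in requests whose total latency fell in the tail. The names `blame_counts`, `rebalance`, and `step_fraction`, and the input format, are hypothetical assumptions for illustration; this is not RobinHood's actual implementation.

```python
from collections import Counter


def blame_counts(tail_requests):
    """Count how often each backend was the slowest sub-query in a tail request.

    tail_requests: list of dicts mapping backend name -> sub-query latency (ms),
    one dict per request whose total latency fell in the tail (e.g. above p99).
    """
    counts = Counter()
    for subqueries in tail_requests:
        slowest = max(subqueries, key=subqueries.get)
        counts[slowest] += 1
    return counts


def rebalance(partitions, tail_requests, step_fraction=0.01):
    """Move a slice of every partition to the backend most blamed for tail latency.

    partitions: dict backend -> cache size in bytes. The total size is preserved.
    """
    counts = blame_counts(tail_requests)
    if not counts:
        return dict(partitions)  # no tail requests observed: keep sizes as-is
    target = counts.most_common(1)[0][0]
    new_sizes = {}
    pool = 0
    for backend, size in partitions.items():
        taken = int(size * step_fraction)  # small slice from each backend
        new_sizes[backend] = size - taken
        pool += taken
    new_sizes[target] = new_sizes.get(target, 0) + pool  # pooled space to target
    return new_sizes
```

Moving only a small fixed fraction per interval keeps the partitions from oscillating when the source of tail latency shifts between backends.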

Microsoft Research has a talk on eliminating long-tail latencies.

Distributed Systems and Scalability Feed